digital photo organization:

Consumers are amassing large personal collections of digital photographs. Photographers often wish to organize these collections according to events such as vacations or family gatherings. Events are difficult to quantify, either in terms of temporal duration or in terms of the statistics of low-level image features. We present unsupervised methods for event-based clustering that use timestamps and image content to partition photo sets into contiguous, time-ordered subsets. Algorithms and evaluation results are summarized in [Cooper et al., 2005] and [Cooper, 2011].
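To make the idea of contiguous, time-ordered partitions concrete, here is a minimal sketch that splits a photo set wherever the gap between consecutive timestamps exceeds a threshold. This is not the method of the cited papers: the function name `cluster_by_time_gap` and the fixed six-hour `max_gap` are assumptions chosen for illustration, and a fixed threshold is exactly the kind of simplification that the difficulty of quantifying event duration argues against.

```python
from datetime import datetime, timedelta


def cluster_by_time_gap(timestamps, max_gap=timedelta(hours=6)):
    """Partition timestamps into contiguous, time-ordered groups.

    A new group starts whenever the gap to the previous photo
    exceeds max_gap (a hypothetical, hand-picked threshold).
    """
    events = []
    for ts in sorted(timestamps):
        if events and ts - events[-1][-1] <= max_gap:
            events[-1].append(ts)   # close enough: same event
        else:
            events.append([ts])     # large gap: start a new event
    return events


# Toy data: three photos on one morning, two more five days later.
photos = [
    datetime(2005, 7, 4, 10, 0),
    datetime(2005, 7, 4, 10, 20),
    datetime(2005, 7, 4, 11, 5),
    datetime(2005, 7, 9, 18, 30),
    datetime(2005, 7, 9, 19, 0),
]
events = cluster_by_time_gap(photos)
print([len(e) for e in events])
```

Because the input is sorted before grouping, each event is a contiguous run in time order, which is the property the partitions described above share.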

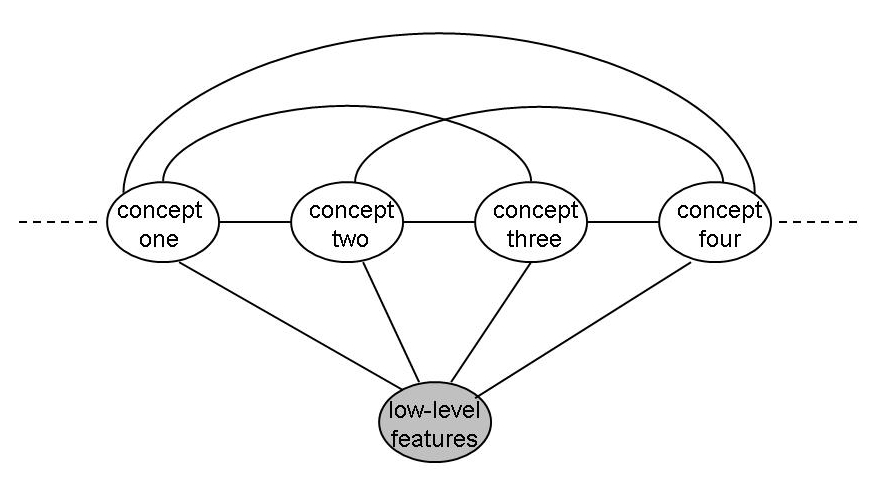

combining search-based and model-based image annotation:

Much of the work in automatic image annotation can be divided into two broad classes: search-based methods and per-label models. For scalability to large collections, search-based methods typically rely on feature-based similarity and ranking, exemplified by nearest neighbors search. For high-accuracy annotation, per-label discriminative models are used, but these can scale poorly to large collections and label vocabularies. [Cooper, 2009] combines the scalability of approximate nearest neighbors search with the accuracy of lightweight per-tag modeling.
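The search-based side of this trade-off can be sketched as nearest neighbors retrieval followed by distance-weighted tag voting. Two simplifications are assumptions of this sketch rather than details from [Cooper, 2009]: exact brute-force search stands in for approximate nearest neighbors, and a weighted vote stands in for the per-tag models. The function `annotate` and the toy feature vectors are hypothetical.

```python
import math
from collections import defaultdict


def annotate(query, collection, k=3, top_tags=2):
    """Rank tags for `query` using its k nearest labeled neighbors."""
    # Exact brute-force search; an approximate index would replace
    # this step in a large collection.
    neighbors = sorted(
        collection, key=lambda item: math.dist(query, item[0])
    )[:k]

    # Each neighbor votes for its tags, weighted by closeness.
    scores = defaultdict(float)
    for features, tags in neighbors:
        weight = 1.0 / (1.0 + math.dist(query, features))
        for tag in tags:
            scores[tag] += weight

    # Highest score first; ties broken alphabetically.
    ranked = sorted(scores, key=lambda t: (-scores[t], t))
    return ranked[:top_tags]


# Toy labeled collection: (feature vector, set of tags).
collection = [
    ((0.0, 0.0), {"beach", "sky"}),
    ((0.1, 0.0), {"beach"}),
    ((0.0, 0.2), {"beach", "people"}),
    ((5.0, 5.0), {"city"}),
    ((5.1, 4.9), {"city", "night"}),
]
predicted = annotate((0.05, 0.05), collection)
print(predicted)
```

Only the retrieved neighbors are scored, so the per-query cost depends on `k` rather than on the size of the tag vocabulary.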


video annotation using random fields:

This work used variously structured conditional random fields to model inter-label and label-feature dependence structures for media annotation. These models also integrate high-performance discriminative single-concept detectors for collective multi-label annotation. Details and experimental results appear in [Cooper, 2007].


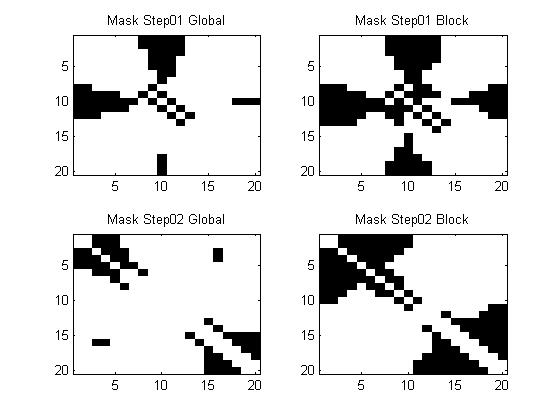

video segmentation via classification:

For the shot boundary detection task of TRECVID, we designed a system for video segmentation that combines inter-frame similarity analysis with supervised classification. We first build a partial inter-frame similarity matrix to quantify local inter-frame similarity. We then use an efficient exact k-nearest-neighbor classifier to determine which frames are cut or gradual shot boundaries, as documented in [Cooper et al., 2007].
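The two stages can be sketched as follows. The partial similarity matrix is stored only for frame pairs within a maximum lag, and each candidate boundary is described by the similarities of the pairs that straddle it. Everything specific here is an assumption for illustration: the similarity measure, the feature layout, the lag of 2, the brute-force classifier (exact, but not the efficient one the text refers to), and the hand-made training examples. Only cuts are shown; gradual boundaries are omitted.

```python
import math
from collections import Counter


def banded_similarity(frames, lag):
    """Similarity for frame pairs (i, j) with 0 < j - i <= lag only."""
    sim = {}
    last = len(frames) - 1
    for i in range(len(frames)):
        for j in range(i + 1, min(i + lag, last) + 1):
            sim[(i, j)] = 1.0 / (1.0 + math.dist(frames[i], frames[j]))
    return sim


def boundary_features(sim, n_frames, lag):
    """Feature vector for the boundary between frames t and t + 1:
    similarities of all stored pairs (i, j) with i <= t < j."""
    feats = {}
    for t in range(lag - 1, n_frames - lag):
        feats[t] = tuple(
            sim[(i, j)]
            for i in range(t - lag + 1, t + 1)
            for j in range(t + 1, t + lag + 1)
            if j - i <= lag
        )
    return feats


def knn_label(feature, training, k=3):
    """Exact k-nearest-neighbor majority vote (brute force)."""
    nearest = sorted(training, key=lambda ex: math.dist(feature, ex[0]))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


# Toy 1-D frame features with an abrupt change between frames 2 and 3.
frames = [(0.0,), (0.1,), (0.0,), (5.0,), (5.1,), (5.0,)]
# Hypothetical labeled examples in the same 3-D feature space (lag = 2).
training = [
    ((0.90, 0.90, 0.90), "none"), ((1.00, 0.95, 0.90), "none"),
    ((0.95, 0.90, 0.20), "none"), ((0.20, 0.90, 0.95), "none"),
    ((0.15, 0.20, 0.15), "cut"), ((0.20, 0.15, 0.20), "cut"),
    ((0.10, 0.10, 0.10), "cut"),
]

lag = 2
sim = banded_similarity(frames, lag)
feats = boundary_features(sim, len(frames), lag)
labels = {t: knn_label(f, training) for t, f in feats.items()}
print(labels)
```

Restricting the matrix to a band keeps the cost linear in the number of frames for a fixed lag, which is the point of building it only partially.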


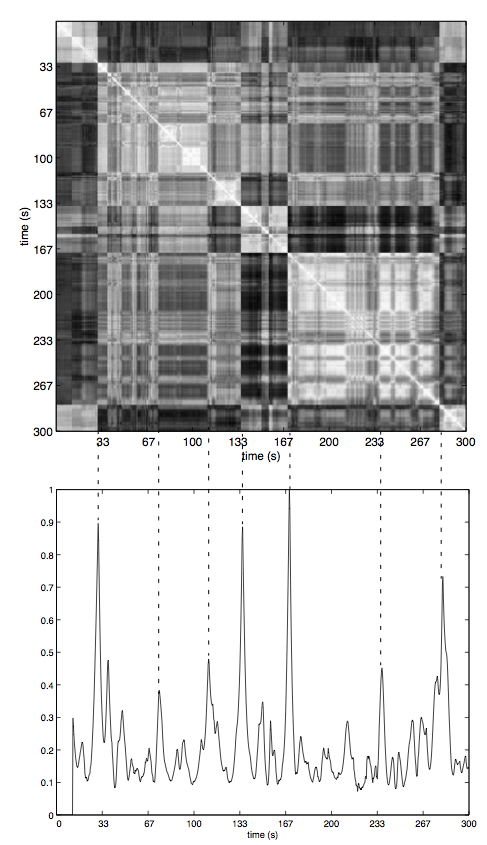

structural analysis of popular music:

We have been exploring the application of spectral clustering to music summarization. Music "thumbnails" are useful to enhance browsing of audio collections or to serve as proxies to improve music information retrieval. We take a hierarchical approach, first segmenting the audio into its main structural components. In the case of popular music, these components are commonly verse and chorus segments. We then compute an inter-segment similarity matrix and its SVD to cluster the segments, and can select a summary using any number of criteria. More details are in [Cooper and Foote, 2003] and [Foote and Cooper, 2003].
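The clustering step can be illustrated with a small pure-Python sketch. It assumes the inter-segment similarity matrix is symmetric and positive semidefinite, in which case its SVD coincides with its eigendecomposition, so the leading singular pairs can be found by power iteration with deflation. The assignment rule (each segment goes to the component that contributes most to its self-similarity) and the toy matrix are assumptions of this sketch and may differ from the procedure in the cited papers.

```python
import math


def matvec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def top_components(sim, n_components, iters=200):
    """Leading (sigma, u) pairs of a symmetric PSD matrix, found by
    power iteration followed by deflation of each found component."""
    n = len(sim)
    work = [row[:] for row in sim]
    comps = []
    for _ in range(n_components):
        u = [1.0 / math.sqrt(n)] * n
        for _ in range(iters):
            w = matvec(work, u)
            norm = math.sqrt(sum(x * x for x in w))
            u = [x / norm for x in w]
        sigma = sum(a * b for a, b in zip(u, matvec(work, u)))
        comps.append((sigma, u))
        # Remove the component so the next pass finds the following one.
        work = [
            [work[i][j] - sigma * u[i] * u[j] for j in range(n)]
            for i in range(n)
        ]
    return comps


def cluster_segments(sim, n_clusters):
    """Assign segment i to the component k maximizing sigma_k * u_k[i]^2."""
    comps = top_components(sim, n_clusters)
    return [
        max(range(n_clusters), key=lambda k: comps[k][0] * comps[k][1][i] ** 2)
        for i in range(len(sim))
    ]


# Toy song structure: verse, chorus, verse, chorus, verse.
structure = "VCVCV"
sim = [
    [1.0 if i == j else (0.9 if a == b else 0.05)
     for j, b in enumerate(structure)]
    for i, a in enumerate(structure)
]
clusters = cluster_segments(sim, 2)
print(clusters)
```

In this toy case the verse segments share one cluster label and the chorus segments the other; a summary could then be chosen from one cluster, for example from its longest segment.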


automatic music video creation:

In this work, we automatically align and synchronize selected video excerpts to an arbitrary soundtrack. The analysis automatically aligns visual shot boundaries with local changes in the source audio and is documented in [Foote, Cooper, and Girgensohn, 2002].
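As a rough illustration of aligning shot boundaries with audio changes, the sketch below moves each boundary to the nearest detected audio change, provided one lies within a tolerance. It assumes both sets of times are already available in seconds; the function `snap_to_audio_changes`, the half-second tolerance, and the example times are hypothetical, and the cited paper's actual alignment procedure may differ.

```python
from bisect import bisect_left


def snap_to_audio_changes(shot_boundaries, audio_changes, tolerance=0.5):
    """Move each shot boundary to the nearest audio change point
    if one lies within `tolerance` seconds; otherwise leave it."""
    changes = sorted(audio_changes)
    snapped = []
    for t in shot_boundaries:
        pos = bisect_left(changes, t)
        # Only the change points just before and just after t can be nearest.
        candidates = changes[max(pos - 1, 0):pos + 1]
        nearest = min(candidates, key=lambda c: abs(c - t), default=t)
        snapped.append(nearest if abs(nearest - t) <= tolerance else t)
    return snapped


# Toy times in seconds.
shots = [2.1, 5.8, 9.0]
audio = [2.0, 4.0, 6.0, 8.0]
aligned = snap_to_audio_changes(shots, audio)
print(aligned)
```

The first two boundaries fall within the tolerance of an audio change and are moved onto it, while the last one is too far from any change and is left where it was.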